Magento 2 Case Study

Creating a Highly Loaded Infrastructure for an Online Store to Carry the Capacity during Sales

- 400k+ Facebook followers

- 10k+ simultaneous website users

- 120k+ monthly visitors

- 70% mobile traffic

Challenges

Our client is in the middle of migrating from PrestaShop to Magento 2.3 Commerce Edition. When choosing a hosting environment, they decide on Amazon Web Services (AWS) and ask us for help with the DevOps part.

The client also expects a high traffic load during sales periods. Based on forecasts for advertising campaigns, they expect the number of simultaneous users to reach 10,000, so we need to create a server infrastructure that can handle the load during sales.


Team

- DevOps Engineer

- Project Manager

- Front-End Developer

- QA Engineer

- Back-End Developer

Services provided

Solutions

Planning Stage

During the analysis of this project, it becomes clear that peak loads occur only occasionally during the year, which means that we need to create a scalable environment that can adapt to the load.

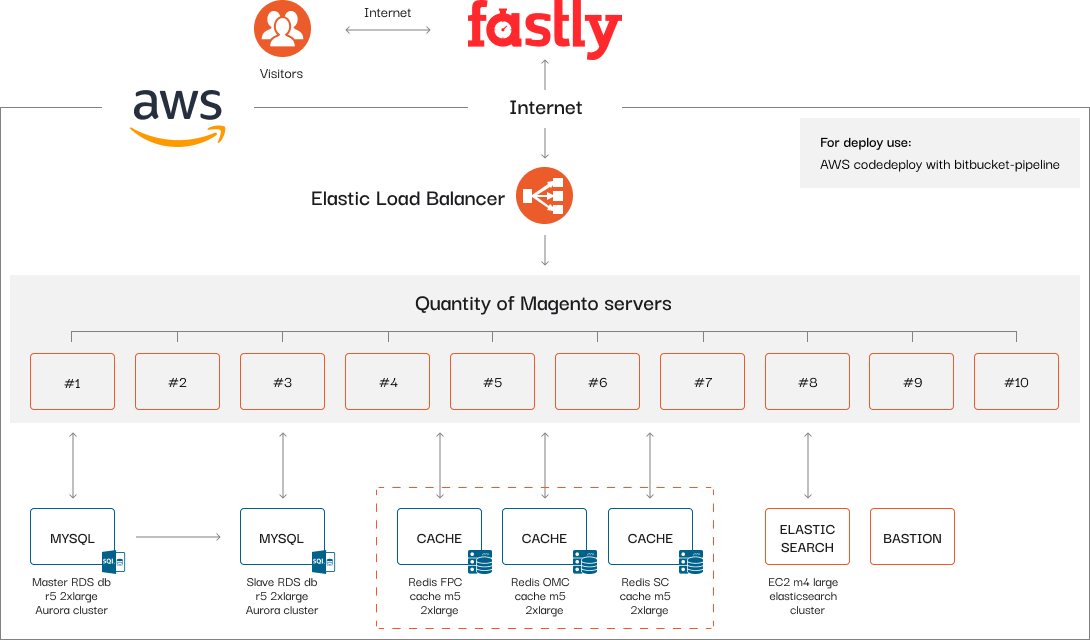

One of the critical points of this project is the high traffic that needs to be offloaded at the entry point of the infrastructure. The project also requires high-performance content delivery to provide a pleasant user experience, so we choose Fastly. It’s a configurable content delivery network (CDN) that can significantly accelerate the delivery of both cacheable and uncacheable content, such as product prices and other dynamic content.

The load also needs to be shared and managed effectively within the architecture, which is why we consider hosting Magento on several servers. Since the infrastructure then becomes multi-server, we decide on an Elastic Load Balancer, which can cope with all of these tasks.
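To illustrate what sharing the load across several Magento servers means, here is a minimal sketch of round-robin distribution. The source doesn’t say which algorithm the load balancer uses, so round-robin is an assumption chosen for simplicity, and the server names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backend servers in a fixed rotating order."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._rotation = cycle(list(servers))

    def next_server(self):
        # Each call returns the next server in the rotation.
        return next(self._rotation)


# Hypothetical server names, used only for illustration.
balancer = RoundRobinBalancer(["magento-1", "magento-2", "magento-3"])
assignments = [balancer.next_server() for _ in range(6)]
print(assignments)
```

With three servers and six requests, each server receives two requests. A managed load balancer also performs health checks and other tasks that this sketch leaves out.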

Next comes the deployment process. It consists of the client’s Git repository and AWS CodeDeploy with Bitbucket Pipelines, which automatically builds, tests, and deploys the code based on a configuration file in the repository. As a result, the pipeline’s commands run with all the advantages of a fresh system.

Fastly CDN is an intermediary that makes the transmission of content efficient, cuts off low-quality traffic, and protects against DDoS attacks.

Elastic Load Balancer automatically distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, and IP addresses, and handles the varying load of application traffic in a single Availability Zone or across several.

There should also be a convenient way to add and remove servers when necessary, and Amazon has a suitable technology for this: an autoscaling group. The more servers are involved, the higher the load they can carry.

When working on such a high-load project, special attention should also be paid to the database. We work with Amazon Aurora, which suits the project because it’s fully managed by Amazon Relational Database Service (RDS). RDS automates time-consuming administration tasks such as hardware provisioning, database setup, patching, and backups.

For the project, we select two powerful database servers, a Master and a Slave, with a synchronization mechanism between them. The Master database server handles writes, and the Slave database server handles reads.
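The read/write split described above can be sketched as a small routing function. This is only an illustration of the idea, not the project’s actual configuration: the endpoint names are hypothetical, and sending anything that isn’t clearly a read to the Master is an assumption made for safety.

```python
# Hypothetical endpoint names; the real ones are not given in the case study.
MASTER = "db-master.example.internal"
SLAVE = "db-slave.example.internal"

# Statements treated as reads; everything else goes to the Master.
READ_KEYWORDS = ("SELECT", "SHOW")


def choose_endpoint(sql: str) -> str:
    """Return the database endpoint that should handle the statement."""
    words = sql.split(None, 1)
    first_word = words[0].upper() if words else ""
    return SLAVE if first_word in READ_KEYWORDS else MASTER


print(choose_endpoint("SELECT sku FROM catalog_product_entity"))
print(choose_endpoint("UPDATE sales_order SET status = 'complete'"))
```

The first call is routed to the Slave and the second to the Master. A real setup also has to account for replication lag, for example by reading from the Master right after a write.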

Another important element that is typical for any Magento project is caching. First, to improve the response time and reduce the load on the server, we implement a full-page cache (FPC) and the Magento object cache (OMC). They’re used to display categories, products, and CMS pages quickly: with caching enabled, a fully generated page can be read directly from fast cache memory.
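The effect of a full-page cache can be shown with a minimal sketch: the first request for a URL renders the page, and later requests read the stored copy. This is a simplified in-memory stand-in, not Magento’s implementation, and `FullPageCache` and `render_page` are hypothetical names.

```python
class FullPageCache:
    """Stores rendered pages by URL so repeated requests skip rendering."""

    def __init__(self, render):
        self._render = render  # slow function that builds a page
        self._pages = {}
        self.hits = 0
        self.misses = 0

    def get(self, url):
        if url in self._pages:
            self.hits += 1
            return self._pages[url]
        # Cache miss: render once, then keep the result for next time.
        self.misses += 1
        html = self._render(url)
        self._pages[url] = html
        return html

    def invalidate(self, url):
        # Drop a stale page, e.g. after a product or price change.
        self._pages.pop(url, None)


render_calls = []


def render_page(url):
    """Hypothetical renderer that records how often it is called."""
    render_calls.append(url)
    return f"<html>page for {url}</html>"


cache = FullPageCache(render_page)
cache.get("/category/shoes")
cache.get("/category/shoes")
print(cache.hits, cache.misses, len(render_calls))
```

Two requests for the same category page trigger a single render. The hard part in practice is invalidation, which this sketch reduces to one method.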

The next step in implementing caching is user sessions. Logged-in users put a significant load on the infrastructure because of the way Magento works with open user sessions. We select the Redis caching service, which can write and read a massive amount of data within a short period of time.

Amazon Aurora is a database built for the cloud that combines the performance and availability of traditional enterprise databases with the simplicity and cost-effectiveness of open-source databases.

Redis is a fast, open-source, in-memory key-value data store for use as a database, cache, message broker, and queue. All Redis data resides in memory, in contrast to databases that store data on disk or SSDs.

An integral part of any ecommerce project is a fast, personalized search experience that lets users find relevant data quickly. The default Magento search doesn’t always return relevant results and is quite resource-consuming, which pushes us to use a third-party system. We choose Elasticsearch because of its proven performance and direct access to its APIs.

This kind of infrastructure needs strong security that minimizes the chance of intrusion from the outside. For this reason, we pick a bastion host, which provides controlled access to the private system from an external network. It also supports multi-factor authentication and adds an extra security layer to prevent unauthorized administrative access to systems.

To understand whether the infrastructure will perform well enough, we perform load testing. Our QA engineer runs the tests, verifies the maximum capacity, and reports back with the metrics.

Elasticsearch is a popular open-source search and analytics engine for use cases such as log analytics, real-time application monitoring, and clickstream analysis.

A bastion is a special-purpose computer on a network, specifically designed and configured to withstand attacks.

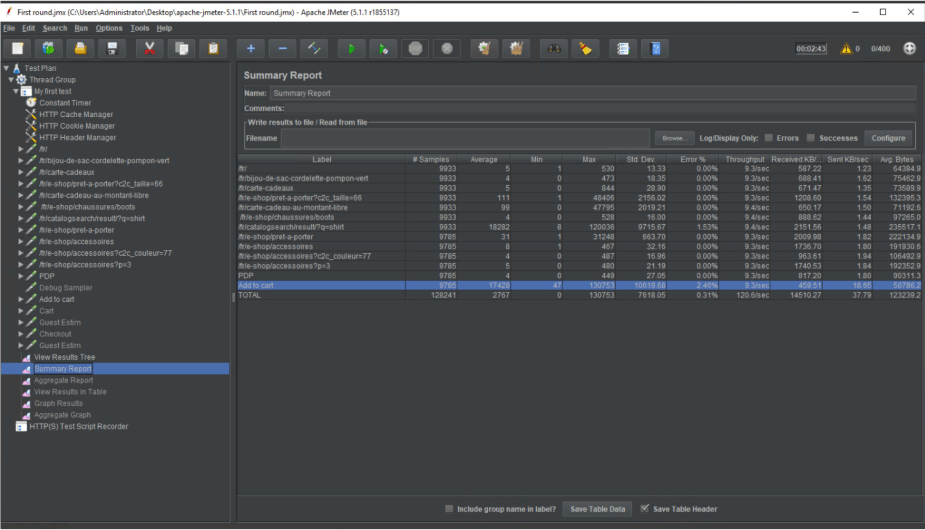

A suitable tool for performing the load testing is JMeter, which examines the server layer and discovers the maximum load a website can handle by simulating a group of users sending requests to a target server.
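The idea of simulating a group of users can be sketched in a few lines. This is not JMeter and doesn’t replace it: `fake_request` is a hypothetical stand-in that sleeps instead of calling a real server, and the user counts are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request():
    """Stand-in for an HTTP call to the store; sleeps instead of using the network."""
    time.sleep(0.001)
    return 200


def run_user(requests_per_user, send=fake_request):
    """Simulate one user: send requests in sequence, record (status, seconds)."""
    samples = []
    for _ in range(requests_per_user):
        started = time.perf_counter()
        status = send()
        samples.append((status, time.perf_counter() - started))
    return samples


def run_load_test(users, requests_per_user):
    """Run all simulated users concurrently and summarize the samples."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        per_user = list(pool.map(run_user, [requests_per_user] * users))
    samples = [s for user_samples in per_user for s in user_samples]
    latencies = sorted(seconds for _, seconds in samples)
    return {
        "requests": len(samples),
        "errors": sum(1 for status, _ in samples if status != 200),
        "max_seconds": latencies[-1],
    }


report = run_load_test(users=20, requests_per_user=5)
print(report)
```

Raising `users` step by step while watching the error count and latency is the basic way to find the point where a system stops coping.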

We usually combine the output of JMeter with New Relic to get a fuller picture of performance. New Relic analyzes how the code performs, points out problems on the Magento side, and offers in-depth reporting.

We visualize the architecture in the scheme below and make calculations of all of the specified tools for the client.

JMeter is a load testing tool for analyzing and measuring the performance of services.

New Relic is a performance management tool that helps analyze and manage application performance and troubleshoot errors and bottlenecks.

Optimization & Execution Stage

After getting the client’s approval, we set up the project execution stage and start with Fastly CDN, Elastic Load Balancer, the deployment process with Bitbucket Pipelines, Elasticsearch, and Bastion.

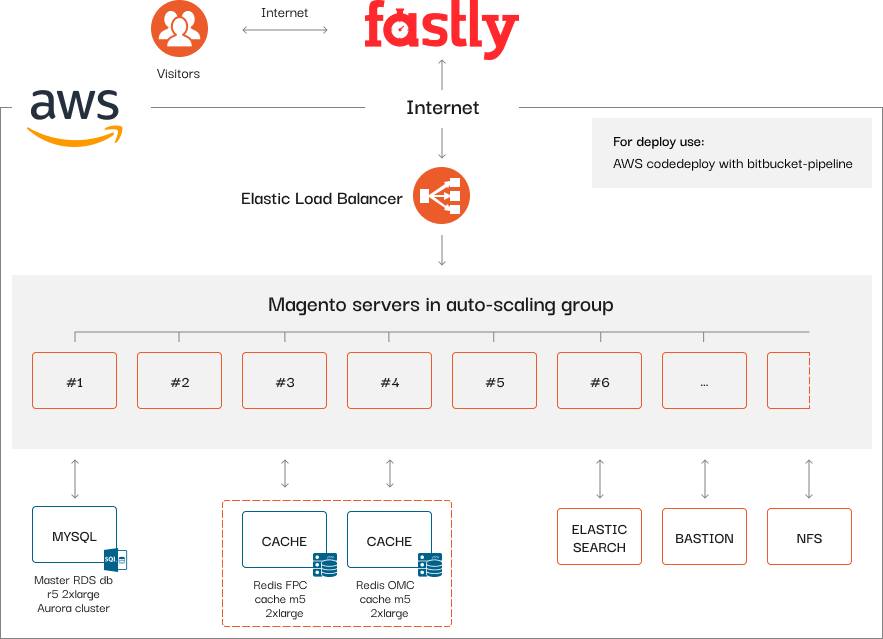

The autoscaling group is implemented with the following rule: once the promotions start, when the average load on the servers reaches 70%, the system adds a new server to balance the overall performance. When the load drops below 70%, servers are removed one by one.
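The rule above can be sketched as a single function. The 70% threshold and the one-server steps come from the text; the `minimum` and `maximum` bounds are assumptions added so that the sketch never scales to zero or without limit.

```python
def desired_server_count(current, average_load_percent,
                         minimum=1, maximum=10, threshold=70.0):
    """Apply the scaling rule once and return the new server count.

    minimum and maximum are hypothetical bounds, not values from the project.
    """
    if average_load_percent >= threshold:
        target = current + 1  # load reached the threshold: add one server
    else:
        target = current - 1  # load is below the threshold: remove one server
    return max(minimum, min(maximum, target))


print(desired_server_count(3, 85.0))
print(desired_server_count(3, 40.0))
print(desired_server_count(1, 10.0))
```

The three calls return 4, 2, and 1. Production scaling policies usually add a cooldown period and separate thresholds for scaling out and in, so that the group doesn’t oscillate around a single value.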

Databases: after conducting the tests, we find that one database server is enough instead of two, so the client can save money.

Storage space is another highlight, as we find a way to optimize the infrastructure and increase the speed of content loading. We implement a media library on the Network File System (NFS) for storing content, so it doesn’t need to be duplicated on each Magento server.

Caching: when we start implementing FPC and OMC, the plan is to move them to separate servers to speed things up. We settle on keeping one cache server for the sessions (Redis) and placing FPC and OMC on each Magento server in the autoscaling group. As a result, we save the client’s budget by reducing the number of Amazon services without loss of efficiency.

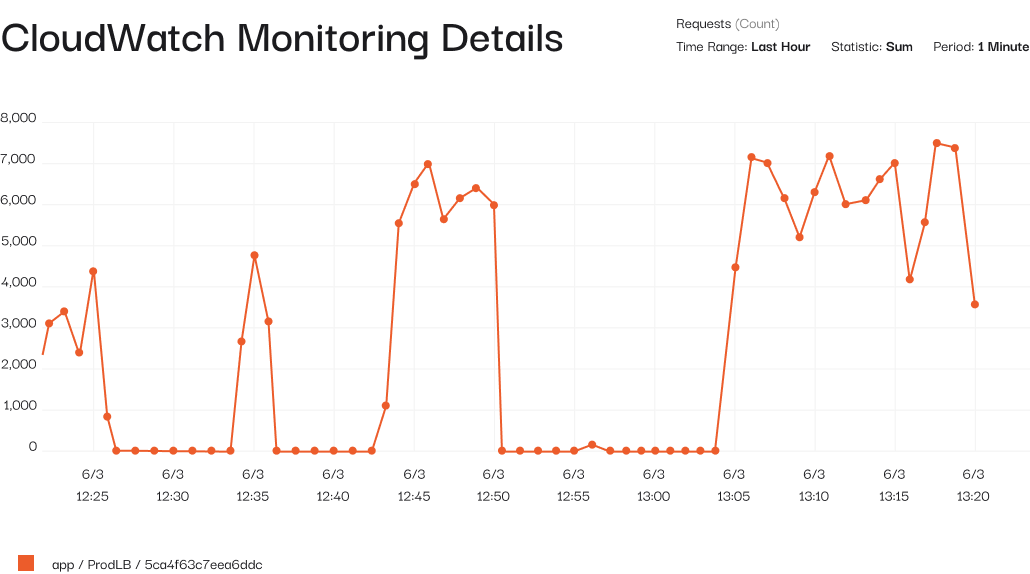

Load testing is an iterative process of putting demand on a system and measuring its response. After running it and getting the metrics, we make recommendations for the server and application parts and fine-tune the infrastructure. The intermediate results, showing the dynamics by number of requests and period, can be found in the New Relic graph below. We run the test on 10 Magento servers in the autoscaling group and reach 7,635 users, which shows us the maximum capacity the system can carry at that moment.
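The intermediate figures allow a rough back-of-the-envelope estimate. The sketch below assumes capacity scales linearly with the number of servers, which is a simplification and not a claim from the case study; tuning the servers and the application changes the per-server figure.

```python
import math


def servers_for(target_users, servers_tested, users_reached):
    """Estimate servers needed for target_users, assuming linear scaling."""
    users_per_server = users_reached / servers_tested
    return math.ceil(target_users / users_per_server)


# Figures from the intermediate test: 10 servers, 7,635 users.
users_per_server = 7635 / 10
estimate = servers_for(target_users=10_000, servers_tested=10, users_reached=7635)
print(users_per_server, estimate)
```

Under that assumption, each server carries about 763.5 users, and 10,000 users would need 14 servers without further optimization. The estimate only shows why the tuning that follows matters; it isn’t the final configuration.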

Network File System (NFS) is a client/server application that lets a computer user view and optionally store and update files on a remote computer as though they were on the user’s own computer.

During the load testing, we follow a common user scenario and then analyze the summary reports from JMeter, which show the data for each stage. There we find the bottlenecks and fix them.

Eventually, the system turns out to be even more efficient than planned.

Result

Now the online store works well when it’s loaded with 10,000 simultaneous users or more. While working on the project, we improved the infrastructure and found a way to reduce its cost for the client. The client also received documentation for all the described processes, and we trained the client’s team so that they wouldn’t have any problems in the future.